By Terry Speed

Most scientists will be familiar with the use of Pearson's correlation coefficient r to measure the strength of association between a pair of variables: for example, between the height of a child and the average height of their parents (r ≈ 0.5; see the figure, panel A), or between wheat yield and annual rainfall (r ≈ 0.75, panel B). However, Pearson's r captures only linear association, and its usefulness is greatly reduced when associations are nonlinear. What has long been needed is a measure that quantifies associations between variables generally: one that reduces to Pearson's r in the linear case but behaves as we would like in the nonlinear case. Reshef et al. introduce the maximal information coefficient (MIC), which can be used to quantify nonlinear associations in data sets equitably.
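
A minimal plain-Python sketch of the limitation just described, using made-up synthetic data: Pearson's r is 1 for an exact straight line but essentially 0 for an exact parabola over a symmetric range, even though y is then completely determined by x. The function name `pearson_r` is chosen here for illustration.

```python
import math


def pearson_r(x, y):
    """Sample Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


# Synthetic x values spread symmetrically over [-1, 1].
xs = [-1 + 2 * i / 100 for i in range(101)]
linear = [3 * v + 2 for v in xs]   # exact linear relationship
parabola = [v * v for v in xs]     # exact, but nonlinear, relationship

print(round(pearson_r(xs, linear), 6))    # 1.0
print(round(pearson_r(xs, parabola), 6))  # approximately 0
```

The parabola is the textbook case: the positive and negative halves of x cancel in the covariance, so r reports no association at all.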

The common correlation coefficient r was invented in 1888 by Charles Darwin's half-cousin Francis Galton. Galton's method for estimating r was very different from the one we use now, but was amenable to hand calculation for samples of up to 1000 individuals. Francis Ysidro Edgeworth and later Karl Pearson gave us the modern formula for estimating r, and it very definitely required a manual or electromechanical calculator to convert 1000 pairs of values into a correlation coefficient. In marked contrast, the MIC requires a modern digital computer for its calculation; there is no simple formula, and no one could compute it on any calculator. This is another instance of computer-intensive methods in statistics.

It is impossible to discuss measures of association without referring to the concept of independence. Events or measurements are termed probabilistically independent if information about some does not change the probabilities of the others. The outcomes of successive tosses of a coin are independent events: knowledge of the outcomes of some tosses does not affect the probabilities for the outcomes of other tosses. By convention, any measure of association between two variables must be zero if the variables are independent. Such measures are also called measures of dependence. There are several other natural requirements of a good measure of dependence, including symmetry, and statisticians have struggled with the challenge of defining suitable measures since Galton introduced the correlation coefficient. Many novel measures of association have been invented, including rank correlation; maximal linear correlation after transforming both variables, which has been rediscovered many times; curve-based methods; and, most recently, distance correlation.
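
Distance correlation, the most recent measure in this list, is built so that its population value is zero only when the variables are independent. The sketch below is a direct O(n²) transcription of the sample (V-statistic) definition, written for clarity rather than speed; the helper names are hypothetical, and the data are synthetic. It shows the parabola that defeats Pearson's r receiving a clearly nonzero score.

```python
import math


def _double_centered_distances(v):
    """Pairwise |v_i - v_j| matrix with row, column and grand means removed."""
    n = len(v)
    d = [[abs(a - b) for b in v] for a in v]
    row_means = [sum(r) / n for r in d]  # matrix is symmetric, so column means match
    grand_mean = sum(row_means) / n
    return [[d[i][j] - row_means[i] - row_means[j] + grand_mean
             for j in range(n)] for i in range(n)]


def _mean_product(a, b):
    """Average of the elementwise product of two n-by-n matrices."""
    n = len(a)
    return sum(a[i][j] * b[i][j] for i in range(n) for j in range(n)) / (n * n)


def distance_correlation(x, y):
    """Sample distance correlation of two equal-length numeric sequences."""
    a = _double_centered_distances(x)
    b = _double_centered_distances(y)
    dcov2 = max(_mean_product(a, b), 0.0)  # guard against round-off below zero
    dvar2_x = _mean_product(a, a)
    dvar2_y = _mean_product(b, b)
    if dvar2_x == 0 or dvar2_y == 0:
        return 0.0
    return math.sqrt(dcov2 / math.sqrt(dvar2_x * dvar2_y))


xs = [-1 + 2 * i / 50 for i in range(51)]
print(distance_correlation(xs, [2 * v + 1 for v in xs]))  # 1 up to round-off
print(distance_correlation(xs, [v * v for v in xs]))      # clearly above 0
```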

To understand where the MIC comes from, we need to go back to Claude Shannon, the founder of information theory. Shannon defined the entropy of a single random variable and laid the groundwork for what we now call the mutual information (MI) of a pair of random variables. This quantity is itself a measure of dependence, and it was first proposed as such in 1957. Reshef et al.'s MIC is the culmination of more than 50 years of development of MI.
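
For a discrete joint distribution, Shannon's entropy and the mutual information can be written down directly, using I(X;Y) = H(X) + H(Y) − H(X,Y). The sketch below uses natural logarithms, so values are in nats, and two made-up tables: MI is zero for two independent fair coins and equals ln 2 when one coin simply copies the other.

```python
import math


def entropy(probs):
    """Shannon entropy in nats; zero-probability cells contribute nothing."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def mutual_information(joint):
    """I(X;Y) = H(X) + H(Y) - H(X,Y) for a joint probability table (rows = X)."""
    p_x = [sum(row) for row in joint]
    p_y = [sum(col) for col in zip(*joint)]
    p_xy = [p for row in joint for p in row]
    return entropy(p_x) + entropy(p_y) - entropy(p_xy)


independent_coins = [[0.25, 0.25], [0.25, 0.25]]
copied_coin = [[0.5, 0.0], [0.0, 0.5]]

print(mutual_information(independent_coins))         # 0 up to round-off
print(mutual_information(copied_coin), math.log(2))  # both ln 2
```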

What took so long, and wherein lies the novelty of MIC? There were three difficulties holding back MI's acceptance as the right generalization of the correlation coefficient. The first was computational: it turns out to be surprisingly tricky to estimate MI well from modest amounts of data, mainly because of the need to carry out two-dimensional smoothing and to calculate logarithms of proportions. Second, unlike the correlation coefficient, MI does not automatically come with a standard numerical range or a ready interpretation of its values. A value of r = 0.5 tells us something about the nature of a cloud of points, but a value of MI = 2.2 does not. The quantity $\sqrt{1 - \exp(-2\,\mathrm{MI})}$ in (10) satisfies all the requirements for a good measure of dependence, apart from ease of computation, and ranges from 0 to 1 as we go from independence to total dependence.

But Reshef et al. wanted more, and this takes us to the heart of MIC. Although r was introduced to quantify the association between two variables evident in a scatter plot, it later came to play an important secondary role as a measure of how tightly or loosely the data are spread around the regression line(s). More generally, the coefficient of determination of a set of data relative to an estimated curve is the square of the correlation between the data points and their corresponding fitted values read from the curve. In this context, Reshef et al. want their measure of association to satisfy the criterion of equitability, that is, to assign similar values to “equally noisy relationships of different types.” MI alone will not satisfy this requirement, but the three-step algorithm leading to MIC does.
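
The sketch below illustrates the ideas in this paragraph under heavy simplifying assumptions; it is not Reshef et al.'s algorithm. Each variable is cut into equal-frequency bins, the plug-in MI of each nx-by-ny grid is divided by log(min(nx, ny)) so that it lies between 0 and 1, and the largest such value over grids with nx·ny below n**0.6 is reported (that grid budget is an assumption here). The real MIC also searches over where the bin boundaries go, so this fixed-binning score, `mic_like` (a hypothetical name), is only a rough stand-in. The last function applies the transform √(1 − exp(−2MI)): for a bivariate normal pair with correlation ρ, MI = −½ ln(1 − ρ²), so the transform returns |ρ|, which is the sense in which it reduces to Pearson's r in the linear Gaussian case.

```python
import math
import random


def equal_frequency_bins(values, k):
    """Label each value with one of k bins holding (nearly) equal numbers of points."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    labels = [0] * len(values)
    for rank, i in enumerate(order):
        labels[i] = rank * k // len(values)
    return labels


def grid_mutual_information(x_labels, y_labels):
    """Plug-in mutual information (nats) computed from grid cell counts."""
    n = len(x_labels)
    joint, count_x, count_y = {}, {}, {}
    for a, b in zip(x_labels, y_labels):
        joint[(a, b)] = joint.get((a, b), 0) + 1
        count_x[a] = count_x.get(a, 0) + 1
        count_y[b] = count_y.get(b, 0) + 1
    return sum((c / n) * math.log(c * n / (count_x[a] * count_y[b]))
               for (a, b), c in joint.items())


def mic_like(x, y, alpha=0.6):
    """Max over equal-frequency grids with nx*ny < n**alpha of MI / log(min(nx, ny))."""
    budget = len(x) ** alpha  # assumed grid-size limit
    best = 0.0
    nx = 2
    while nx * 2 < budget:
        ny = 2
        while nx * ny < budget:
            mi = grid_mutual_information(equal_frequency_bins(x, nx),
                                         equal_frequency_bins(y, ny))
            best = max(best, mi / math.log(min(nx, ny)))
            ny += 1
        nx += 1
    return best


def mi_to_unit_scale(mi):
    """Map MI (nats) onto [0, 1) via sqrt(1 - exp(-2 * MI))."""
    return math.sqrt(1 - math.exp(-2 * mi))


xs = [-1 + 2 * i / 199 for i in range(200)]
random.seed(0)
noise_x = [random.random() for _ in range(200)]
noise_y = [random.random() for _ in range(200)]

print(mic_like(xs, [v * v for v in xs]))  # close to 1: noiseless parabola
print(mic_like(noise_x, noise_y))         # much smaller: independent data

rho = 0.6
gaussian_mi = -0.5 * math.log(1 - rho ** 2)  # MI of a bivariate normal pair
print(mi_to_unit_scale(gaussian_mi))          # recovers |rho| = 0.6
```

Dividing by log(min(nx, ny)) is what gives the grid score a fixed 0-to-1 range, because MI on such a grid can never exceed that logarithm.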

Is this the end of the Galton-Pearson correlation coefficient r? Not quite. A very important extension of the linear correlation $r_{XY}$ between a pair of variables X and Y is the partial (linear) correlation $r_{XY\cdot Z}$ between X and Y while a third variable, Z, is held at some value. In the linear world, the magnitude of $r_{XY\cdot Z}$ does not depend on the value at which Z is held; in the nonlinear world, it may, and that could be very interesting. Thus, we need extensions of MIC(X, Y) to MIC(X, Y | Z). We will want to know how much data are needed to get stable estimates of MIC, how susceptible it is to outliers, what three- or higher-dimensional relationships it will miss, and more. MIC is a great step forward, but there are many more steps to take.
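
In the linear case, the partial correlation can be computed from the three pairwise coefficients with the standard formula r_{XY·Z} = (r_{XY} − r_{XZ} r_{YZ}) / √((1 − r_{XZ}²)(1 − r_{YZ}²)). A minimal sketch, with hypothetical coefficient values chosen only to exercise the formula:

```python
import math


def partial_correlation(r_xy, r_xz, r_yz):
    """Linear partial correlation of X and Y with Z held fixed."""
    return (r_xy - r_xz * r_yz) / math.sqrt((1 - r_xz ** 2) * (1 - r_yz ** 2))


# Hypothetical pairwise correlations, for illustration only.
print(partial_correlation(0.5, 0.5, 0.5))  # (0.5 - 0.25) / 0.75 = 1/3
print(partial_correlation(0.3, 0.5, 0.6))  # X-Y link fully accounted for by Z: 0
```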

See the original article in Science

Friday, 16 December 2011

Wednesday, 7 December 2011

Layer by layer

By Nicola McCarthy

The view that cancer is purely a genetic disease has taken a battering over recent years, perhaps most extensively from the recent discovery that between transcription and translation sits a whole host of regulatory RNAs, chiefly in the guise of microRNAs (miRNAs). Now, we can add yet another layer of regulation: the evidence from three papers that protein-coding and non-coding RNAs influence the interaction of miRNAs with their target RNAs.

Pier Paolo Pandolfi and colleagues had previously suggested that the miRNA response element (MRE) in the 3′ untranslated region (UTR) of RNAs could be used to decipher a network of RNAs that are bound by a common set of miRNAs. RNAs within this network would function as competing endogenous RNAs (ceRNAs) that can regulate one another by competing for specific miRNAs. Using an integrated computer analysis and an experimental validation process that they termed mutually targeted MRE enrichment (MuTaME), Tay et al. identified a set of PTEN ceRNAs in prostate cancer and glioblastoma samples. As predicted, some of these ceRNAs are regulated by the same set of miRNAs that regulate PTEN and have similar expression profiles to PTEN. For example, knockdown of the ceRNAs VAPA or CNOT6L using small interfering RNAs (siRNAs) reduced expression levels of PTEN; conversely, expression of the ceRNA 3′ UTRs to which the miRNAs bind increased expression of PTEN 3′ UTR–luciferase constructs. Importantly, the link between PTEN, VAPA and CNOT6L was lost in cells that had defective miRNA processing, indicating that miRNAs are crucial for these effects.

Pavel Sumazin, Xuerui Yang, Hua-Sheng Chiu, Andrea Califano and colleagues investigated the mRNA and miRNA network in glioblastoma cells. They found a surprisingly large post-transcriptional regulatory network, involving some 7,000 RNAs that can function as miRNA sponges and 148 genes that affect miRNA–RNA interactions through non-sponge effects. In tumours that have an intact or heterozygously deleted PTEN locus, expression levels of the protein vary substantially, indicating that other modulators of expression are at work. Analysis of 13 genes that are frequently deleted in patients with glioma and that encode miRNA sponges that compete with PTEN in the RNA network showed that a change in their mRNA expression had a significant effect on the level of PTEN mRNA. Specifically, siRNA-mediated silencing of ten of the 13 genes reduced PTEN levels and substantially increased proliferation of glioblastoma cells. Conversely, expression of the PTEN 3′ UTR increased the expression of these 13 miRNA sponges.

These results indicate that reduced expression of a specific set of mRNAs can affect the expression of other RNAs that form part of an miRNA–mRNA network. Moreover, they hint at the subtlety of changes that could be occurring during tumorigenesis, in which a small reduction in the expression level of a few mRNAs could have wide-ranging effects.

Saturday, 19 November 2011

The Human Genome Project, Then and Now

An early advocate of the sequencing of the human genome reflects on his own predictions from 1986.

By Walter F. Bodmer

In The Scientist’s first issue, Walter Bodmer, then Research Director at the Imperial Cancer Research Fund Laboratories in London, and later the second president of the Human Genome Organisation, wrote an opinion about the potential of a Human Genome Project (HGP). Now, more than a decade after the first draft genome was published, he reflects on the accuracy of those 1986 predictions.

In 1986 Bodmer predicted: the human genome would allow the characterization of “…10,000 or so basic genetic functions…”

In 2011 Bodmer says: “The ‘10,000 or so basic genetic functions’ were not to be equated to genes, but to clusters of genes with related functions, and was not far off the mark. Now, however, we know that multiple splice products and considerable numbers of nonprotein coding, yet functional, sequences greatly extend the potential complexity of the human genome beyond the bare count of some 20,000–25,000 genes.”

1986: “Given a knowledge of the complete human gene sequence, there is no limit to the possibilities for analyzing and understanding…essentially all the major human chronic diseases…”

2011: “Now, with next-generation sequencing, one can even identify a mutant gene in a single appropriate family.”

1986: “The project will provide information of enormous interest for unraveling of the evolutionary relationships between gene products within and between species, and will reveal the control language for complex patterns of differential gene expression during development and differentiation.”

2011: “This has been achieved because, as expected, the HGP generated a huge amount of information on other genomes. However, only now is the challenge of the genetics of normal variation, including, for example, in facial features which are clearly almost entirely genetically determined, being met. Next, perhaps, will come the objective genetic analysis of human behavior.”

1986: “The major challenge is to coordinate activities of scientists working in this field worldwide.”

2011: “Collaboration has proved to be fundamental to the success of the Human Genome Project, which set the stage for global cooperation and, most importantly, open exchange and availability of new DNA sequences, and, more generally, large data bases of information.”

1986: “The project will include…development of approaches for handling large genetic databases.”

2011: “As predicted, there have been major developments in the ability to handle large databases. Accompanying this have been new approaches to data analysis using, for example, Markov Chain Monte Carlo simulations that are hugely computer intensive.

The most surprising, and certainly not predicted development, has been the extraordinary rate of advance first in the techniques for large scale automated genotyping, then in whole genome mRNA analysis and finally in DNA sequencing where the rate of reduction in cost and increase in speed of DNA sequencing has exceeded all expectations and has even exceeded the rate of developments in computing. This has, for example, made population based whole genome sequencing more or less a reality.

Perhaps one of the greatest future technological challenges will be to apply these techniques to single cells and to achieve a comparable level of sophistication of cellular analysis, to that we now have for working with DNA, RNA, and proteins.”

1986: “We should call it Project 2000.”

2011: “This prediction also came to pass, with a little political license. I am referring to the fact that Bill Clinton and Tony Blair announced the completion of the project on 26th June 2000, while the first proper publication of a (very) rough draft was not till February 2001 and it took several more years until a fully reliable complete sequence became available. The public announcement was no doubt politically motivated with, I am sure, support from the scientists, to promote what was being done and have something to say at the beginning of the new millennium. An intriguing interaction between science and politics, that in contrast for example to the Lysenko episode in the Soviet Union, was quite harmless and may even have been of benefit.”

Monday, 17 October 2011

What’s wrong with correlative experiments?

Here, we make a case for multivariate measurements in cell biology with minimal perturbation. We discuss how correlative data can identify cause-effect relationships in cellular pathways with potentially greater accuracy than conventional perturbation studies.

Marco Vilela and Gaudenz Danuser

How often do reviews for a paper contain “unfortunately, the link between these data is correlative”? As authors, we fear this critique; it is either the death sentence for a manuscript or, with a more forgiving editor, the beginning of a long series of new experiments. So, why is a correlative link considered weak? One common answer is that correlated observations can equally represent a cause-effect relation between two interacting components (A causes B causes C) or a common-cause relation between two otherwise independent components (B and C are both caused by A). Although this is a valid concern, the root of the problem lies in the unclear definition of what causation actually means. Thus, it becomes a subjective measure. Even mathematicians, whose job is to bring formalism to science, are still engaged in a vigorous debate about how to define causation.
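
The common-cause case can be made concrete with a small simulation, a sketch with arbitrary made-up parameters: B and C are each driven by A plus independent noise and never interact, yet they are clearly correlated. Once the linear influence of A is removed from both (by correlating their residuals after a least-squares line fit on A), the correlation largely disappears. This check is only possible because A is measured alongside B and C.

```python
import math
import random


def pearson_r(x, y):
    """Sample Pearson correlation coefficient."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def residuals(y, x):
    """Residuals of y after an ordinary least-squares line fit on x."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    slope = (sum((a - mx) * (b - my) for a, b in zip(x, y))
             / sum((a - mx) ** 2 for a in x))
    return [b - my - slope * (a - mx) for a, b in zip(x, y)]


random.seed(1)
n = 5000
a = [random.gauss(0, 1) for _ in range(n)]  # common cause A
b = [v + random.gauss(0, 1) for v in a]     # B = A + independent noise
c = [v + random.gauss(0, 1) for v in a]     # C = A + independent noise

print(pearson_r(b, c))                              # around 0.5: B and C correlate
print(pearson_r(residuals(b, a), residuals(c, a)))  # near 0 once A is accounted for
```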

Cell biologists usually use perturbations of pathways to establish cause-effect relationships. We break the system and then conclude that the perturbed pathway component is responsible for the difference we observe relative to the behaviour of the unperturbed system; for example, tens of thousands of studies have derived the function of a protein from the phenotype produced by its knockdown. Although this approach has led to immensely valuable models of cellular pathways, it has its limitations. First, the approach is again correlative, at best: all it does is correlate the intervention with the shifts in system behaviour. Second, this correlation is relatively easy to interpret when the relation between the perturbed component and the measured system parameter is linear; nonlinearities in the system complicate the analysis of the outcome. Third, system adaptations and side effects in response to interventions are concerns. If there is a correlation between an intervention and the behaviour of an altered system, how can we be sure that it is indeed related to the perturbed component? Strictly, we can only conclude that we observe the system behaviour in the absence of the intact component. However, it is exceedingly difficult to infer how the targeted component contributed to the unperturbed system behaviour. Of course, we do controls to address this issue. For example, we pair knockdown of a protein with its overexpression, or carry out rescue experiments of mutants. But how often are conclusions drawn with imperfect controls? On the bright side, many powerful tools are emerging with the capability to perturb pathways specifically, acutely and locally. Although this will not remedy the ambiguities of interpreting results from interventions in nonlinear systems, it will greatly reduce the risk of system adaptation.

Read more: Nature Cell Biology Volume: 13, Page: 1011 (2011)

Tuesday, 6 September 2011

Vive la Différence

Measuring how individual cells differ from each other will enhance the predictive power of biology.

By H. Steven Wiley

Science is all about prediction. Its power lies in the ability to make highly specific forecasts about the world. The traditional “hard” sciences, such as chemistry and physics, use mathematics as the basis of their predictive power. Biology sometimes uses math to predict small-scale phenomena, but the complexity of cells, tissues, and organisms does not lend itself to easy capture by equations.

Systems biologists have been struggling to develop new mathematical and computational approaches to begin moving biology from a mostly descriptive to a predictive science. To be most useful, these models need to tell us not only why different types of cells can behave in much the same way, but also why seemingly identical cells can behave so differently.

Cell biologists typically want to define the commonalities among cells, not the differences. To this end, experiments are frequently performed on many different types of cultured cells to derive qualitative answers, such as whether a specific treatment activates a particular signaling pathway or causes the cells to migrate or proliferate. These types of experiments can delineate general rules of cell behavior, but building models of how the rules actually work requires focusing on a single cell type and making precise measurements at the level of individual cells.

Computational models of individual cells assume that the cell expresses specific activity levels of proteins or other molecules. Unfortunately, even within one cell type, the parameters and values used to build these models are almost always obtained from populations of cells in which each member has a slightly different composition. Variation in protein levels between cells gives rise to different responses, but if you are only measuring an average, you can’t tell whether all of the cells are responding halfway, or whether half of the cells are responding all the way.
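
The closing point can be shown with toy numbers, made up for illustration: a population in which every cell responds at 50% and one in which half the cells respond fully and half not at all have the same average. Only per-cell measurements, summarized here by the variance and the fraction of cells above half-maximal response, tell them apart.

```python
import statistics

# Hypothetical single-cell responses (fraction of maximal response), 100 cells each.
all_halfway = [0.5] * 100
half_all_the_way = [1.0] * 50 + [0.0] * 50

for name, cells in [("all halfway", all_halfway),
                    ("half all the way", half_all_the_way)]:
    mean = statistics.mean(cells)
    var = statistics.pvariance(cells)
    responders = sum(1 for v in cells if v > 0.5) / len(cells)
    # Means match (0.50); variance and responder fraction differ.
    print(f"{name}: mean={mean:.2f}, variance={var:.2f}, above half-max={responders:.2f}")
```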

The systems biology community is starting to recognize the problems associated with population-based measurements, and single-cell measurements are becoming more common. Although currently limited in the number of parameters that can be measured at once, such studies have provided insights into the extent to which seemingly identical cells can vary. Interestingly, studies of bacterial populations have shown that some processes are designed to vary wildly from one cell to another as a way for the microbe to “hedge its bets.” By trying out various survival strategies at once, a population can ensure that at least a fraction of its gene pool will survive an unexpected change in conditions.

Variations in the responses of cells within multicellular organisms are obviously under much tighter control than in free-living unicellular organisms, if for no other reason than the need to precisely coordinate the behavior of cells during development. A rich area of current research in systems biology is how negative feedback loops can serve to reduce cell-cell variations and how a breakdown in the tight regulation of a cell’s response can lead to cancer and other diseases.

Despite the newfound appreciation for the need to consider cell-cell variations in building accurate models, more attention should be given to the network databases that serve as the foundation for these models. Unfortunately, data from different cell types are typically fed into those databases with no regard to their source. Models built from these amalgamated data sets result in a Frankenstein cell that could never actually exist. An oft-cited, large-scale signaling model for the EGF receptor pathway was assembled from bits of lymphocytes, fibroblasts, neurons, and epithelial cells. Yikes!

Using well-characterized model cell systems such as yeast to build predictive models is a good first step, but will not get us where we need to be with respect to predicting the drug response of cancer cells or understanding the mechanism of type 2 diabetes, because of the simplicity of the models’ intercellular interactions.

No one model system can be ideal for all problems in systems biology, but the current practice of pooling data from multiple types of cells to build specific models is absurd. We need to agree on a few well-characterized cell types to provide data for reference models. And we need to expect and embrace differences among cells—both between and within types—as revealing fundamental insights into the regulation of biological responses.

By H. Steven Wiley

Science is all about prediction. Its power lies in the ability to make highly specific forecasts about the world. The traditional “hard” sciences, such as chemistry and physics, use mathematics as the basis of their predictive power. Biology sometimes uses math to predict small-scale phenomena, but the complexity of cells, tissues, and organisms does not lend itself to easy capture by equations.

Systems biologists have been struggling to develop new mathematical and computational approaches to begin moving biology from a mostly descriptive to a predictive science. To be most useful, these models need to tell us not only why different types of cells can behave in much the same way, but also why seemingly identical cells can behave so differently.

Cell biologists typically want to define the commonalities among cells, not the differences. To this end, experiments are frequently performed on many different types of cultured cells to derive qualitative answers, such as whether a specific treatment activates a particular signaling pathway or causes the cells to migrate or proliferate. These types of experiments can delineate general rules of cell behavior, but to build models of how the rules actually work requires focusing on a single cell type and also making precise measurements at the level of individual cells.

Computational models of individual cells assume that the cell expresses specific activity levels of proteins or other molecules. Unfortunately, even within one cell type, the parameters and values used to build these models are almost always obtained from populations of cells in which each member has a slightly different composition. Variation in protein levels between cells gives rise to different responses, but if you are only measuring an average, you can’t tell whether all of the cells are responding halfway, or whether half of the cells are responding all the way.
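The "halfway versus half the cells" problem can be made concrete in a few lines of Python. This is a minimal sketch with invented numbers: two hypothetical populations have the same average response, yet individual cells behave completely differently. The 0-to-1 response scale and the 0.9 threshold for calling a cell "responding" are assumptions chosen for illustration.

```python
from statistics import mean

# Hypothetical per-cell responses, scaled from 0 (no response) to 1 (full response).
# Population A: every cell responds halfway.
graded = [0.5] * 10
# Population B: half the cells respond fully, half not at all.
all_or_none = [1.0] * 5 + [0.0] * 5

def fraction_responding(responses, threshold=0.9):
    """Fraction of cells whose response reaches the (assumed) threshold."""
    return sum(r >= threshold for r in responses) / len(responses)

# A bulk, population-averaged measurement cannot tell the two apart...
print(mean(graded), mean(all_or_none))  # 0.5 0.5
# ...but per-cell measurements can.
print(fraction_responding(graded), fraction_responding(all_or_none))  # 0.0 0.5
```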

The systems biology community is starting to recognize the problems associated with population-based measurements, and single-cell measurements are becoming more common. Although currently limited in the number of parameters that can be measured at once, such studies have provided insights into the extent to which seemingly identical cells can vary. Interestingly, studies of bacterial populations have shown that some processes are designed to vary wildly from one cell to another as a way for the microbe to “hedge its bets.” By trying out various survival strategies at once, a population can ensure that at least a fraction of its gene pool will survive an unexpected change in conditions.
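Bet hedging can be illustrated with a toy simulation. The sketch below is not taken from the bacterial studies mentioned; it assumes a hypothetical population in which a fixed fraction of cells stays dormant, active cells double under good conditions and die under stress, and dormant cells neither grow nor die. The function and parameter names (`simulate`, `fraction_dormant`, `growth`) are illustrative.

```python
def simulate(fraction_dormant, environments, growth=2.0):
    """Track relative population size through a series of environments.

    Toy assumptions: active cells multiply by `growth` in "good" conditions
    and all die in "stress"; dormant cells neither grow nor die. After each
    round the survivors split again into active and dormant cells in the
    same proportion.
    """
    population = 1.0
    for env in environments:
        active = population * (1 - fraction_dormant)
        dormant = population * fraction_dormant
        if env == "good":
            active *= growth
        else:  # "stress"
            active = 0.0
        population = active + dormant
    return population

history = ["good"] * 5 + ["stress"] + ["good"] * 5
print(simulate(0.0, history))      # 0.0: the uniform population is wiped out
print(simulate(0.1, history) > 0)  # True: the bet-hedging population persists
```

In unbroken good conditions the uniform population grows faster (each round multiplies it by 2, versus 1.9 when 10% of cells stay dormant); that lower growth rate is the price the mixed population pays for surviving the rare bad round.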

Variations in the responses of cells within multicellular organisms are obviously under much tighter control than in free-living unicellular organisms, if for no other reason than the need to precisely coordinate the behavior of cells during development. A rich area of current research in systems biology is how negative feedback loops can reduce cell-cell variation and how a breakdown in the tight regulation of a cell’s response can lead to cancer and other diseases.
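Why negative feedback damps cell-to-cell variation can be shown with a textbook control-theory toy model; the model and its parameters are assumptions here, not taken from the article. Each hypothetical cell has a gain `k` (standing in for, say, the abundance of a signaling protein) that varies across the population. Without feedback the output is `k * u`; with negative feedback of strength `f` it is `k * u / (1 + k * f)`, which becomes insensitive to `k` when `k * f` is large.

```python
import random
from statistics import mean, stdev

def cv(values):
    """Coefficient of variation: standard deviation relative to the mean."""
    return stdev(values) / mean(values)

def open_loop(k, u=1.0):
    # No feedback: output scales directly with the cell's gain k.
    return k * u

def closed_loop(k, u=1.0, f=1.0):
    # Negative feedback: the output opposes its own production,
    # giving y = k*u / (1 + k*f); for large k*f this approaches u/f.
    return k * u / (1 + k * f)

random.seed(0)
# Hypothetical cell-to-cell spread in gain (e.g. protein abundance).
gains = [random.lognormvariate(2.0, 0.5) for _ in range(1000)]

print(f"CV without feedback: {cv([open_loop(k) for k in gains]):.2f}")
print(f"CV with feedback:    {cv([closed_loop(k) for k in gains]):.2f}")
```

With these assumed parameters the relative spread of the output drops sharply once feedback is added, even though the underlying spread in `k` is identical.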

Despite the newfound appreciation for the need to consider cell-cell variations in building accurate models, more attention should be given to the network databases that serve as the foundation for these models. Unfortunately, data from different cell types are typically fed into those databases with no regard to their source. Models built from these amalgamated data sets result in a Frankenstein cell that could never actually exist. An oft-cited, large-scale signaling model for the EGF receptor pathway was assembled from bits of lymphocytes, fibroblasts, neurons, and epithelial cells. Yikes!
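One practical remedy is to keep the cell type attached to every interaction in a network database, so that models can be assembled from a single cell type rather than from pooled data. The sketch below is a hypothetical schema, not the format of any existing database; the EGF-pathway edges are well known, but the cell types assigned to them here are invented for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass

@dataclass(frozen=True)
class Interaction:
    source: str     # upstream molecule
    target: str     # downstream molecule
    cell_type: str  # where the interaction was measured

# Hypothetical records: the cell-type annotations are invented.
records = [
    Interaction("EGFR", "GRB2", "epithelial"),
    Interaction("GRB2", "SOS1", "epithelial"),
    Interaction("GRB2", "SOS1", "fibroblast"),
    Interaction("SOS1", "RAS", "lymphocyte"),
]

def network_by_cell_type(interactions):
    """Group edges by the cell type they were measured in,
    instead of pooling them into one 'Frankenstein' network."""
    networks = defaultdict(set)
    for rec in interactions:
        networks[rec.cell_type].add((rec.source, rec.target))
    return dict(networks)

pooled = {(r.source, r.target) for r in records}
per_type = network_by_cell_type(records)
print(len(pooled))                     # 3
print(sorted(per_type["epithelial"]))  # [('EGFR', 'GRB2'), ('GRB2', 'SOS1')]
```

Here the pooled network contains the SOS1 to RAS edge even though, in this invented example, it was observed only in lymphocytes; the epithelial network does not.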

Tuesday, 16 August 2011

The Virtual Physiological Rat

The NIH awards $13 million to create a computer model of a lab rat.

By Jessica P. Johnson

Lab rats are finally catching a break. The Medical College of Wisconsin in Milwaukee has received a 5-year, $13 million grant to establish a National Center for Systems Biology, reports Newswise. The first task of the Center will be to create a virtual lab rat—a computer model that will gather all available research data for rats into one place.

By integrating these scattered data into a single model, researchers will be able to better understand rat physiology as a whole and to predict how multiple systems will interact in response to environmental and genetic causes of disease. They will also be able to input multiple causes at once and observe the virtual rat’s physiological response. The research will focus on diseases including hypertension, renal disease, heart failure, and metabolic syndromes.

“The Virtual Physiological Rat allows us to create a model for disease that takes into account the many genes and environmental factors believed to be associated,” Daniel Beard, a computational biologist and the principal investigator on the grant, said in a press release.

But rats aren’t completely off the hook. Once the computer model generates hypotheses about systemic response to disease, researchers will use the information to produce new knockout strains of rats to verify the model’s predictions.

Tuesday, 5 July 2011

RNA and DNA Sequence Differences

Researchers compared RNA sequences from the B cells of 27 individuals with the corresponding DNA sequences from the same individuals and found 28,766 events at more than 10,000 exonic sites where the RNA sequence does not match the DNA. These differences were nonrandom: many sites were found in multiple individuals and in different cell types, including primary skin cells and brain tissue. Using mass spectrometry, the researchers also detected peptides that are translated from the discordant RNA sequences and thus do not correspond exactly to the DNA sequences.
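At its core, finding RNA-DNA differences means comparing each position of an RNA sequence with the DNA it was transcribed from, remembering that RNA carries U where DNA carries T. The function below is a minimal sketch on short, already aligned toy sequences (the function name and sequences are hypothetical). Real analyses work with high-throughput sequencing reads and must also handle alignment, sequencing errors, and coverage thresholds, all of which this sketch ignores.

```python
def rna_dna_differences(dna, rna):
    """Return (position, dna_base, rna_base) for each site where an aligned
    RNA sequence disagrees with its DNA (coding-strand) sequence."""
    if len(dna) != len(rna):
        raise ValueError("sequences must be aligned to the same length")
    diffs = []
    for pos, (d, r) in enumerate(zip(dna.upper(), rna.upper())):
        # RNA uses U where DNA uses T, so T/U is not a mismatch.
        r_as_dna = "T" if r == "U" else r
        if d != r_as_dna:
            diffs.append((pos, d, r))
    return diffs

dna = "ATGGCAAGT"
rna = "AUGGCGAGU"
print(rna_dna_differences(dna, rna))  # [(5, 'A', 'G')]
```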

The mechanisms underlying these events are largely unknown. In most cases, it is not yet known whether a different base was incorporated into the RNA during transcription or whether the change occurs posttranscriptionally. Either way, these widespread RNA-DNA differences in the human transcriptome reveal a previously unexplored aspect of genome variation.

Read more:

Science. 2011 Jul 1;333(6038):53-8.

Wednesday, 29 June 2011

Molecular Cartography for Stem Cells

From Cell

Researchers have long suspected that cancer cells and stem cells share a core molecular signature, but this commonality remains incompletely understood. By examining microRNA (miRNA) expression patterns in multiple types of cancer cells and stem cells, Neveu et al. (2010) have now developed the miRMap, a tool that facilitates quantitative examination of the molecular hallmarks associated with a cell's identity. Their effort resulted in a catalog of expression patterns for nearly 330 miRNAs in over 50 different human cell lines. Despite the overwhelming molecular heterogeneity among the samples, their analysis identifies 11 miRNAs that make up a signature common to all cancer cells and shared by a subset of pluripotent stem cells. The genes targeted by these miRNAs downregulate cell proliferation or upregulate the p53 network. Although recent reports have called attention to the inherent oncogenic risk of stem cells, this new study shows that only some pluripotent stem cells look like cancer cells, and that this cancer-like state cannot be predicted from a stem cell's tissue of origin or method of production. By examining the changes associated with cellular reprogramming, both when stem cells are generated from somatic cells and when induced pluripotent stem cells (iPSCs) are differentiated, Neveu et al. reveal a precise window for cancer-like behavior during iPSC generation, emphasizing that a transient downregulation of p53, rather than a complete inhibition, is required to fully reprogram somatic cells into pluripotent stem cells. Multidimensional molecular maps, such as the miRMap, help us draw a more complete picture of the events that drive the acquisition of specific cell fates during cellular reprogramming.
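The idea of a signature "common to all cancer cells and shared by a subset of pluripotent stem cells" can be sketched with set operations. This is a deliberate simplification: the miRMap work is quantitative, whereas the sketch below reduces each sample to a hypothetical set of detected miRNAs (all sample names and miRNA labels are invented) and takes their intersection.

```python
# Hypothetical presence/absence calls per sample; names and contents are invented.
samples = {
    "cancer_line_1": {"miR-a", "miR-b", "miR-c", "miR-d"},
    "cancer_line_2": {"miR-a", "miR-b", "miR-d"},
    "cancer_line_3": {"miR-a", "miR-b", "miR-d", "miR-e"},
    "stem_line_1": {"miR-a", "miR-b", "miR-d"},
    "stem_line_2": {"miR-c", "miR-e"},
}

cancer = [s for s in samples if s.startswith("cancer")]
# Signature: miRNAs detected in every cancer sample.
signature = set.intersection(*(samples[s] for s in cancer))

# Non-cancer samples that carry the entire shared signature.
cancer_like = [s for s in samples if s not in cancer and signature <= samples[s]]
print(sorted(signature))  # ['miR-a', 'miR-b', 'miR-d']
print(cancer_like)        # ['stem_line_1']
```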

P. Neveu et al. (2010). Cell Stem Cell 7, 671–681.

Tuesday, 14 June 2011

Stem cells under attack

Effie Apostolou & Konrad Hochedlinger

From Nature

Induced pluripotent stem cells offer promise for patient-specific regenerative therapy. But a study now cautions that, even when immunologically matched, these cells can be rejected after transplantation.

In 2006, Takahashi and Yamanaka made a groundbreaking discovery. When they introduced four specific genes associated with embryonic development into adult mouse cells, the cells were reprogrammed to resemble embryonic stem cells (ES cells). They named these cells induced pluripotent stem cells (iPS cells). This approach does not require the destruction of embryos, and so assuaged the ethical concerns surrounding research on ES cells. What's more, researchers subsequently noted that the use of 'custom-made' adult cells derived from human iPS cells might ultimately allow the treatment of patients with debilitating degenerative disorders. Given that such cells' DNA is identical to that of the patient, it has been assumed — although never rigorously tested — that they wouldn't be attacked by the immune system. However, Zhao et al. show, in a mouse transplantation model, that some iPS cells are immunogenic, raising concerns about their therapeutic use.

Regardless of the answers to the outstanding questions, this and other recent studies reach one common conclusion: researchers must learn more about the mechanisms underlying cellular reprogramming and the inherent similarities and differences between ES cells and iPS cells. Only on careful examination of these issues can we know whether such differences pose an impediment to the potential therapeutic use of iPS cells, and how to address them. In any case, these findings should not affect the utility of iPS-cell technology for studying diseases and discovering drugs in vitro.

Nature Volume: 474, Pages: 165–166 Date published: 09 June 2011

Tuesday, 7 June 2011

Will you take the 'arsenic-life' test?

Erika Check Hayden

At first, it sounded like the discovery of the century: a bacterium that can survive by using the toxic element arsenic instead of phosphorus in its DNA and in other biomolecules.

But scientists have lined up to criticize the claim since it appeared in Science six months ago. Last week, the journal published a volley of eight technical comments summarizing the key objections to the original paper, along with a response from the authors, who stand by their work.

The authors of the original paper are also offering to distribute samples of the bacterium, GFAJ-1, so that others can attempt to replicate their work. The big question is whether researchers will seize the opportunity to test such an eye-popping claim or, as some are already saying, dismiss the chance to repeat work they believe is fundamentally flawed as a waste of time. "I have not found anybody outside of that laboratory who supports the work," says Barry Rosen of Florida International University in Miami, who published an earlier critique of the paper.

Some are also frustrated that the authors did not release any new data in their response, despite having had ample time to conduct follow-up experiments of their own to bolster their case. "I'm tired of rehashing these preliminary data," says John Helmann of Cornell University in Ithaca, New York, who critiqued the work in January on the Faculty of 1000 website. "I look forward to the time when they or others in the field start doing the sort of rigorous experiments that need to be done to test this hypothesis."

The original study, led by Felisa Wolfe-Simon, a NASA astrobiology research fellow at the US Geological Survey in Menlo Park, California, looked at bacteria taken from the arsenic-rich Mono Lake in eastern California. The authors grew the bacteria in their lab using a medium that contained arsenic but no added phosphorus. Even without this essential element of life, the bacteria reproduced and integrated arsenic into their DNA in place of the missing phosphorus, the paper reported.

"We maintain that our interpretation of As [arsenic] substitution, based on multiple congruent lines of evidence, is viable," Wolfe-Simon and her colleagues wrote in last week's response.

But critics have pointed out that the growth medium contained trace amounts of phosphorus — enough to support a few rounds of bacterial growth. They also note that the culturing process could have helped arsenic-tolerant bacteria to survive by killing off less well-equipped microbes.

Others say that there is simply not enough evidence that arsenic atoms were incorporated into the bacterium's DNA. The chemical instability of arsenate relative to phosphate makes this an extraordinary claim that would "set aside nearly a century of chemical data concerning arsenate and phosphate molecules", writes Steven Benner of the Foundation for Applied Molecular Evolution in Gainesville, Florida.

A leading critic of the work, Rosemary Redfield of the University of British Columbia in Vancouver, Canada, says that it would be "relatively straightforward" to grow the bacteria in arsenic-containing media and then analyse them using mass spectrometry to test whether arsenic is covalently bonded into their DNA backbone.

Redfield says that she will probably get samples of GFAJ-1 to run these follow-up tests, and hopes that a handful of other laboratories will collaborate to repeat the experiments independently and publish their results together.

But some principal investigators are reluctant to spend their resources, and their students' time, replicating the work. "If you extended the results to show there is no detectable arsenic, where could you publish that?" asks Simon Silver of the University of Illinois at Chicago. "How could the young person who was asked to do that work ever get a job?"

Helmann says that he is in the process of installing a highly sensitive mass spectrometer that can measure trace quantities of elements, which could help refute or corroborate the findings. But the equipment would be better employed on original research, he says. "I've got my own science to do."

Tuesday, 24 May 2011

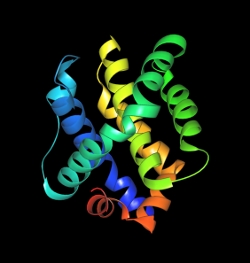

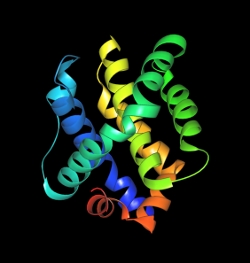

Scaffold Proteins: Hubs for Controlling the Flow of Cellular Information

Matthew C. Good et al.

From Science

Mammalian cells contain an estimated 1 billion individual protein molecules, with as many as 10% of these involved in signal transduction. Given this enormous number of molecules, it seems remarkable that cells can accurately process the vast array of signaling information they constantly receive. How can signaling proteins find their correct partners, and avoid incorrect ones, among so many other proteins?

A principle that has emerged over the past two decades is that cells achieve specificity in their molecular signaling networks by organizing discrete subsets of proteins in space and time. For example, functionally interacting signaling components can be sequestered into specific subcellular compartments (e.g., organelles) or at the plasma membrane. Another solution is to assemble functionally interacting proteins into specific complexes. More than 15 years ago, the first scaffold proteins were discovered—proteins that coordinate the physical assembly of components of a signaling pathway or network. These proteins have captured the attention of the signaling field because they appear to provide a simple and elegant solution for determining the specificity of information flow in intracellular networks.

Scaffold Proteins: Versatile Tools to Assemble Diverse Pathways

Scaffolds are extremely diverse proteins, many of which are likely to have evolved independently. Nonetheless, they are conceptually related, in that they are usually composed of multiple modular interaction domains or motifs. Their exact domain composition and order, however, can vary widely depending on the pathways that they organize. In some cases, homologous individual interaction motifs can be found in scaffolds associated with particular signaling proteins. For example, the AKAPs (A-kinase anchoring proteins), which link protein kinase A (PKA) to diverse signaling processes, all share a common short peptide motif that binds to the regulatory subunit of PKA. However, the other domains in individual AKAPs are highly variable, depending on what inputs and outputs the scaffold protein coordinates with PKA. Thus, scaffold proteins are flexible platforms assembled by mixing and matching interaction domains.

Scaffold proteins function in a diverse array of biological processes. Simple mechanisms (such as tethering) are layered with more sophisticated mechanisms (such as allosteric control) so that scaffolds can precisely control the specificity and dynamics of information transfer. Scaffold proteins can also control the wiring of more complex network configurations: they can integrate feedback loops and regulatory controls to generate precisely controlled signaling behaviors. The versatility of scaffold proteins comes from their modularity, which allows protein interaction domains to be recombined to generate new signaling pathways. Cells use scaffolds to diversify signaling behaviors and to evolve new responses. Pathogens can also create scaffold proteins that work to their advantage: their virulence depends on rewiring host signaling pathways to turn off or evade host defenses. In the lab, scaffolds are being used to build new, predictable signaling or metabolic networks to program useful cellular behaviors.

Monday, 16 May 2011

Multitasking Drugs

Paula A. Kiberstis

The escalating cost of developing new drugs has reinvigorated interest in “drug repositioning,” the idea that a drug with a good track record for clinical safety and efficacy in treating one disease might have broader clinical applications, some of which would not easily be predicted from the drug's mechanism of action. This concept is illustrated by two recent studies that propose that drugs developed for cardiovascular disease might offer beneficial effects in the setting of prostate cancer.

Farwell et al. suggest that statins (cholesterol-lowering drugs) merit serious consideration as a possible preventive strategy for prostate cancer. Building on earlier work, they examined the medical records of more than 55,000 men and found that those who had been prescribed statins were 31% less likely to be diagnosed with prostate cancer than those who had been prescribed a different class of medication (antihypertensives). In independent work, Platz et al. screened for agents that inhibit the growth of prostate cancer cells and found that one of the most effective was digoxin, a drug used to treat heart failure and arrhythmia. A complementary epidemiological analysis of about 48,000 men revealed that digoxin use was associated with a 25% lower risk of prostate cancer, leading the authors to suggest that this drug be further studied as a possible therapeutic for the disease.
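Statements such as "31% less likely" or "25% lower risk" are percentage reductions read off a ratio below 1, whether a simple risk ratio or a related measure such as a hazard or odds ratio. The sketch below shows only that arithmetic, using invented counts; the published estimates come from the studies' own statistical models, not from a calculation like this one.

```python
def relative_risk(cases_exposed, n_exposed, cases_ref, n_ref):
    """Risk in the exposed group divided by risk in the reference group."""
    return (cases_exposed / n_exposed) / (cases_ref / n_ref)

def percent_lower(ratio):
    """Express a ratio below 1 as a percentage reduction."""
    return (1 - ratio) * 100

# Hypothetical counts chosen only to illustrate the arithmetic;
# they are NOT data from either study.
rr = relative_risk(cases_exposed=69, n_exposed=10_000, cases_ref=100, n_ref=10_000)
print(round(rr, 2), round(percent_lower(rr)))  # 0.69 31
```

Read in reverse, a 25% lower risk corresponds to a ratio of 0.75.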

J. Natl. Cancer Inst. 103, 1 (2011); Cancer Discovery 1, OF66 (2011).

Saturday, 14 May 2011

Complex networks: Degrees of control

Magnus Egerstedt

Networks can be found all around us. Examples include social networks (both online and offline), mobile sensor networks and gene regulatory networks. Such constructs can be represented by nodes and by edges (connections) between the nodes. The nodes are individual decision makers, for instance people on the social-networking website Facebook or DNA segments in a cell. The edges are the means by which information flows and is shared between nodes. But how hard is it to control the behaviour of such complex networks?

The flow of information in a network is what enables the nodes to make decisions or to update internal states or beliefs, for example an individual's political affiliation or the proteins being expressed in a cell. The result is a dynamic network, in which the nodes' states evolve over time. The overall behaviour of such a dynamic network depends on several factors: how the nodes make their decisions and update their states; what information is shared along the edges; and what the network itself looks like, that is, which nodes are connected by edges.

Imagine that you want to start a trend by influencing certain individuals in a social network, or that you want to propagate a drug through a biological system by injecting the drug at particular locations. Two obvious questions are: which nodes should you pick, and how effective are these nodes when it comes to achieving the desired overall behaviour? If the only important factor is the overall spread of information, these questions amount to finding and characterizing effective decision-makers. However, the nodes' dynamics (how information is used to update the internal states) and the information flow (what information is actually shared) must also be taken into account.

Central to the question of how information, injected at certain key locations, can be used to steer the overall system towards some desired performance is the notion of controllability — a measure of what states can be achieved from a given set of initial states. Different dynamical systems have different levels of controllability. For example, a car without a steering wheel cannot reach the same set of states as a car with one, and, as a consequence, is less controllable.
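Controllability can be made concrete in the simplest setting. Assuming linear time-invariant dynamics dx/dt = Ax + Bu, where A[i][j] is an (arbitrary, illustrative) weight with which node j influences node i and B injects a separate input at each chosen node, the classical Kalman test says the system is controllable exactly when the matrix [B, AB, ..., A^(n-1)B] has full rank n. The sketch below, with hypothetical helper names, applies that test to two toy four-node networks. Real node dynamics are usually nonlinear, so this illustrates the concept rather than reproducing the method behind the results discussed here.

```python
from fractions import Fraction


def mat_vec(A, v):
    """Matrix-vector product A @ v for lists of numbers."""
    return [sum(a * x for a, x in zip(row, v)) for row in A]


def rank(columns):
    """Rank of a list of vectors, by exact Gaussian elimination (row rank = column rank)."""
    rows = [[Fraction(x) for x in col] for col in columns]
    r = 0
    ncols = len(rows[0]) if rows else 0
    for c in range(ncols):
        pivot = next((i for i in range(r, len(rows)) if rows[i][c] != 0), None)
        if pivot is None:
            continue
        rows[r], rows[pivot] = rows[pivot], rows[r]
        for i in range(len(rows)):
            if i != r and rows[i][c] != 0:
                f = rows[i][c] / rows[r][c]
                rows[i] = [a - f * b for a, b in zip(rows[i], rows[r])]
        r += 1
        if r == len(rows):
            break
    return r


def is_controllable(A, input_nodes):
    """Kalman rank test for dx/dt = A x + B u, with one input per node in input_nodes."""
    n = len(A)
    columns = []
    for j in input_nodes:
        v = [1 if i == j else 0 for i in range(n)]  # column of B for node j
        for _ in range(n):                            # B, AB, A^2 B, ..., A^(n-1) B
            columns.append(v)
            v = mat_vec(A, v)
    return rank(columns) == n


chain = [[0, 0, 0, 0],
         [1, 0, 0, 0],
         [0, 1, 0, 0],
         [0, 0, 1, 0]]   # 0 -> 1 -> 2 -> 3
star = [[0, 0, 0, 0],
        [2, 0, 0, 0],
        [3, 0, 0, 0],
        [5, 0, 0, 0]]    # hub 0 drives leaves 1, 2, 3 with arbitrary weights 2, 3, 5

print(is_controllable(chain, [0]))       # True
print(is_controllable(star, [0]))        # False
print(is_controllable(star, [0, 1, 2]))  # True
```

A single input at the start of the chain controls every node. A single input at the hub of the star also reaches every node, yet all three leaves are driven by the same hub signal and cannot be steered independently; adding inputs at two of the leaves restores full control.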

Researchers found that, for several types of network, controllability is connected to a network's underlying structure. They identified the driver nodes (those into which control inputs are injected) that can direct the network to a given behaviour. The surprising result is that driver nodes tend to avoid the network hubs. In other words, centrally located nodes are not necessarily the best ones for influencing a network's performance. So in social networks, for example, the most influential members may not be those with the most friends.

This type of analysis makes it possible to determine how many driver nodes are needed for complete control over a network. The authors carried out the analysis for several real networks, including gene regulatory networks that control cellular processes, large-scale data networks such as the World Wide Web, and social networks. We have a certain intuition about how hard it might be to control such networks. For instance, one would expect cellular processes to be designed to be amenable to control, so that they can respond swiftly to external stimuli, whereas one would expect social networks to resist being controlled by a small number of driver nodes.
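For directed networks whose links have unknown but generic weights, one known way to count driver nodes comes from structural control theory: find a maximum matching (the largest set of links in which no two links share a starting node or share an ending node); the nodes that no matched link points into are driver nodes, and at least one driver is always needed. The sketch below illustrates that criterion and is not necessarily the authors' exact procedure. The function names and toy networks are hypothetical, and a link (u, v) means that u influences v.

```python
def max_matching(n, edges):
    """Maximum matching of a directed graph: each node is matched at most once as a
    tail and at most once as a head. Uses augmenting paths (Kuhn's algorithm)."""
    out = [[] for _ in range(n)]
    for u, v in edges:
        out[u].append(v)
    match_head = [-1] * n  # match_head[v] = tail u of the matched link u -> v, or -1

    def try_augment(u, seen):
        for v in out[u]:
            if v in seen:
                continue
            seen.add(v)
            # Take v if it is free, or if its current partner can be rematched elsewhere.
            if match_head[v] == -1 or try_augment(match_head[v], seen):
                match_head[v] = u
                return True
        return False

    for u in range(n):
        try_augment(u, set())
    return match_head


def driver_nodes(n, edges):
    """Unmatched heads are driver nodes; with a perfect matching one driver suffices
    (node 0 is an arbitrary choice here)."""
    match_head = max_matching(n, edges)
    drivers = [v for v in range(n) if match_head[v] == -1]
    return drivers if drivers else [0]


examples = {
    "chain 0->1->2->3": (4, [(0, 1), (1, 2), (2, 3)]),
    "in-star 1,2,3->0": (4, [(1, 0), (2, 0), (3, 0)]),
}
for name, (n, edges) in examples.items():
    d = driver_nodes(n, edges)
    print(f"{name}: {len(d)} driver node(s) {d}, {len(d) / n:.0%} of nodes")
```

In the chain, one input at the first node suffices (25% of nodes). In the in-star, where three leaves feed a hub, the hub is matched and the three leaves are the drivers (75% of nodes). In this toy case the drivers avoid the hub, although a single small example is no evidence for the general pattern.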

It turns out that this intuition is entirely wrong. Social networks are much easier to control than biological regulatory networks, in the sense that fewer driver nodes are needed to fully control them (that is, to take the networks from a given configuration to any desired configuration). The authors find that, to fully control a gene regulatory network, roughly 80% of the nodes must be driver nodes. By contrast, for some social networks only 20% of the nodes need to be driver nodes. What's more, the authors show that engineered networks such as power grids and electronic circuits are overall much easier to control than social networks and gene regulatory networks. This is due both to the higher density of interconnections (edges) and to the homogeneous nature of the network structure.

These startling findings significantly further our understanding of the fundamental properties of complex networks. One implication of the study is that both social networks and naturally occurring networks, such as those involving gene regulation, are surprisingly hard to control. To a certain extent this is reassuring, because it means that such networks are fairly immune to hostile takeovers: a large fraction of the network's nodes must be directly controlled for the whole of it to change. By contrast, engineered networks are generally much easier to control, which may or may not be a good thing, depending on who is trying to control the network.

Thursday, 5 May 2011

If Bacteria Can Do It…

Learning community skills from microbes

By H. Steven Wiley

Numerically and by biomass, bacteria are the most successful organisms on Earth. Much of this success is due to their small size and relative simplicity, which allow fast reproduction and correspondingly rapid evolution. But the price of small size and rapid growth is a small genome, which constrains the diversity of metabolic functions that a single microbe can have. Thus, bacteria tend to be specialized for using just a few substrates. So how can simple bacteria thrive in a complex environment? By cooperating: theirs is a cooperation driven by need.

Bacteria rarely live in a given ecological niche by themselves. Instead, they exist in communities in which one bacterial species generates as waste the substrates another species needs to survive. Their waste products are used, in turn, by other bacterial species in a complex food chain. Survival requires balancing the needs of the individual with the well-being of the group, both within and across species. How this balancing act is orchestrated can be fascinating to explore as the relative roles of cooperation, opportunism, parasitism and competition change with alterations in available resources.

The dynamics of microbial behavior are not just a great demonstration of how the laws of natural selection work and how they depend on the nature of both selective pressures and environmental constraints. Microbial communities also demonstrate important nongenetic principles of cooperation. And herein lie lessons that scientists can emulate.

To be successful, scientists must be able to compete not only for funding but also for important research topics that will give them visibility and attract good students. In the earlier days of biology, questions were more general, making it easier to keep up with broad fields and to exploit novel research findings as they arose. As the nature of our work has become more complex and the amount of biological information has exploded, we have necessarily become more specialized. There is only so much information each of us can handle.

With specialization has come an increasing dependence on other specialized biologists to provide us with needed data and to support our submitted papers and grants. At the same time, resources have become scarcer, and we find ourselves competing with the same scientists on whom we are becoming dependent. Thus, it is necessary to find a balance between cooperation and competition in order to survive, and perhaps even to thrive.

The composition of microbial communities is driven by both the interaction of different species and external environmental factors that determine resource availability. Scientists want to learn the rules governing these complex relationships so they can reengineer bacterial communities for the production of useful substances, or for bioremediation. Perhaps as we learn the optimal strategies that microbial communities use to work together effectively, we will gain insights into how we can better work together as a community of scientists.

The Scientist
